Gay Composite Portraits? American Scientists Develop Algorithms That Trace Homosexuality in the Face (Raul Gschrey)

Composite screening is back again… In a study conducted at Stanford University, USA, two scientists, Michal Kosinski and Yilun Wang, have developed an algorithm that aims to detect the sexual orientation of individuals from their facial appearance. The scientists draw on pictures from a dating website and claim that their big-data experiment, using special facial recognition and matching software, identifies the homosexual orientation of men with an accuracy of 81% and that of women with an accuracy of 74%. The deep neural networks (DNNs) used in artificial intelligence (AI), they state, excel at recognizing patterns in large unstructured datasets in order to make predictions. The results of the AI, they argue, were more reliable than human judgment and revealed the limits of human perception. The authors conclude that sexual orientation might be prenatal (probably inherited) and that this inner disposition shows in outer facial appearance. Here we are, back in Francis Galton’s world: a revived version of the prejudice-entrenched scientific positivism of the nineteenth century.

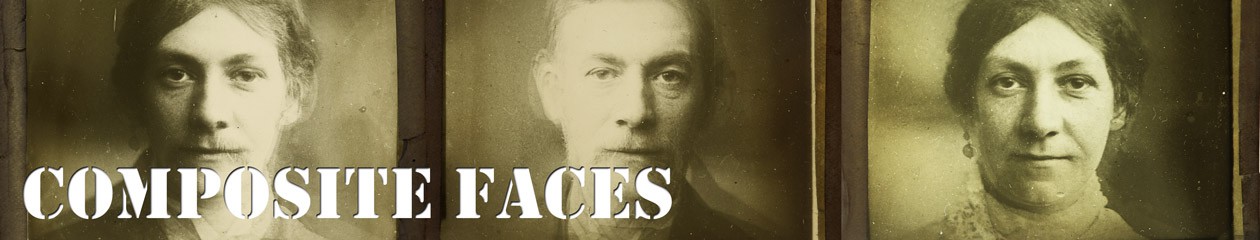

Wang, Yilun; Kosinski, Michal: “Composite faces and the average facial landmarks built by averaging faces classified as most and least likely to be gay.” In: Wang, Yilun; Kosinski, Michal: “Deep Neural Networks Can Detect Sexual Orientation From Faces.” Forthcoming in Journal of Personality and Social Psychology. [https://osf.io/zn79k]

And here the whole endeavor becomes most problematic: the scientists have chosen to publish composite portraits of male and female, gay and straight ‘faces’, showing “the average landmark locations and aggregate appearance of the faces classified as most and least likely to be gay.” In a second step, this visual data is used to classify the outer appearance of homosexual people. These remarks read like an excerpt from the work of Cesare Lombroso or Francis Galton, who are known not only as father figures of the racist and ‘pseudo-scientific’ fields of criminal anthropology and eugenics, but also as pioneers of the technique of composite portraiture:

“Average landmark locations revealed that gay men had narrower jaws and longer noses, while lesbians had larger jaws. Composite faces suggest that gay men had larger foreheads than heterosexual men, while lesbians had smaller foreheads than heterosexual women.”[1]

In their article, Kosinski and Wang acknowledge the long and problematic (scientific) history of physiognomy, but argue that, despite all taboos, scientific evidence suggests a link between facial appearance and inner traits. In the case of visual signs of specific sexual orientations, they point to hormonal theories and genetic dispositions, but also to social factors; or ‘nature and nurture’, as it is referred to in the report, an expression coined by Sir Francis Galton himself. This unreflective approach to the theories, techniques and terminology of past science seems to characterize the study as a whole, for instance in its use of the term ‘race’ to describe ethnic diversity.

As a sort of disclaimer, the authors discuss ethical issues and privacy concerns and warn that government and private agencies are already engaged in developing face-based classifiers aimed at detecting intimate traits. While Kosinski and Wang argue that their findings serve to alert the public rather than to provide evidence against a minority group, the thoughtless and historically uncritical publication of a visually striking and potentially derogatory composite portrait is highly questionable and may be dangerous. This is attested by a number of newspaper articles that present short, oversimplified summaries of the findings and often use the ‘gay composite’ as a visual anchor.[2] Some take the idea further and warn of algorithms that could detect psychological dispositions and political leanings from the face,[3] while other journalists focus on the criticism from LGBT groups.[4]

[1] Wang, Yilun; Kosinski, Michal: “Deep Neural Networks Can Detect Sexual Orientation From Faces.” Forthcoming in Journal of Personality and Social Psychology. [https://osf.io/zn79k]

[2] See: The Telegraph, 8.9.2017 [http://www.telegraph.co.uk/technology/2017/09/08/ai-can-tell-people-gay-straight-one-photo-face] and The Economist, 9.9.2017 [https://www.economist.com/news/science-and-technology/21728614-machines-read-faces-are-coming-advances-ai-are-used-spot-signs?fsrc=scn/tw/te/bl/ed/advancesinaiareusedtospotsignsofsexuality]

[3] See: RT Deutsch, 9.9.2017 [https://de.rt.com/180n]

[4] See: The Guardian, 8.9.2017 [https://www.theguardian.com/world/2017/sep/08/ai-gay-gaydar-algorithm-facial-recognition-criticism-stanford]